About

PowerAqua was a multi-ontology-based Question Answering (QA) system. It took queries expressed in natural language as input and returned answers drawn from relevant distributed resources on the Semantic Web. Unlike other natural language front ends available at the time, PowerAqua was not restricted to a single ontology and therefore provided the first comprehensive attempt at supporting open domain QA on the Semantic Web.

PowerAqua evolved from the earlier AquaLog system, a portable ontology-based semantic QA system for intranets. PowerAqua extended AquaLog's capabilities to support QA in the open domain of the Semantic Web (SW). It was designed to take advantage of the vast amount of heterogeneous semantic data offered by the SW in order to interpret a query, without making any a priori assumptions about which ontologies were relevant to that query.

The key challenges in answering queries in the open SW scenario, which required dynamically locating and combining information from multiple domains, were:

- Locating the ontologies relevant to a query (e.g., “which footballer’s wife is a fashion celebrity?”)

- Identifying semantically sound mappings (e.g., the sense of “capital” indicated by its ancestor “city”)

- Providing complete coverage of a user’s query (often by combining information from multiple ontologies)

We envisioned a scenario in which the user had to interact with thousands of semantic documents structured according to hundreds of ontologies. PowerMap was the solution adopted by PowerAqua to map user terminology into ontology-compliant terminology, distributed across ontologies, in real time. The Approach and components section and the related publications give an overview of the algorithm.

To conclude, PowerAqua balanced the scale and heterogeneity of the available semantic data against the need to return results in real time. It translated user terminology into semantically sound terminology distributed across ontologies, so that the concepts shared by assertions taken from different ontologies had the same sense. The goal was to handle queries that could not be answered from a single knowledge source and instead required combining multiple sources, and even multiple domains.

Approach and components

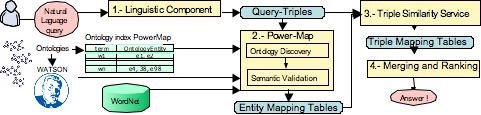

In the first step, the linguistic component analyzed the natural language query and translated it into its linguistic triple form. For example, the query “What are the cities of Spain?” was converted into the linguistic triple (<what-is, cities, Spain>).
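As an illustration of the triple form, here is a minimal Python sketch. The `LinguisticTriple` class, its generic field names, and the single regular expression are assumptions made for this example; they are not PowerAqua's actual linguistic component, which handled far more query shapes.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class LinguisticTriple:
    """Hypothetical container for a triple such as <what-is, cities, Spain>."""
    first: str
    second: str
    third: str


# Toy pattern covering only queries of the form "What is/are the X of Y?".
_WHAT_IS_PATTERN = re.compile(
    r"^what (?:is|are) the (?P<term>.+?) of (?P<arg>.+?)\??$",
    re.IGNORECASE,
)


def to_linguistic_triple(query: str) -> Optional[LinguisticTriple]:
    """Return a what-is triple for the toy pattern, or None if it does not match."""
    match = _WHAT_IS_PATTERN.match(query.strip())
    if match is None:
        return None
    return LinguisticTriple("what-is", match.group("term"), match.group("arg"))


triple = to_linguistic_triple("What are the cities of Spain?")
```

Queries outside this one pattern simply return `None` in the sketch.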

In the second step, the Ontology Discovery submodule of PowerMap identified a set of ontologies likely to provide the requested information. It searched for approximate syntactic matches within ontology indexes, using both the linguistic triple terms and lexically related words from WordNet and the ontologies. For example, the term “cities” matched with concepts like “city” and “metropolis.” After identifying possible syntactic mappings, the PowerMap Semantic Filtering submodule checked their validity using a WordNet-based filtering method. This process generated Entity Mapping Tables linking each query term to a set of concepts mapped in different domain ontologies.
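The discovery step can be sketched roughly as follows. Everything here is a stand-in chosen for illustration: `RELATED_WORDS` replaces real WordNet lookups, `ONTOLOGY_INDEX` is a made-up three-ontology index, and approximate matching uses the standard library's `difflib` rather than whatever matcher PowerMap used. The semantic filtering step is not modelled.

```python
from difflib import SequenceMatcher

# Hypothetical stand-in for lexically related words obtained from WordNet.
RELATED_WORDS = {"cities": {"city", "metropolis"}}

# Hypothetical index: ontology name -> labels of the concepts it defines.
ONTOLOGY_INDEX = {
    "geo": {"City", "Country", "River"},
    "travel": {"Metropolis", "Hotel"},
    "finance": {"Capital", "Stock"},
}


def similar(a, b, threshold=0.8):
    """Approximate syntactic match based on a case-insensitive similarity ratio."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio() >= threshold


def build_entity_mapping_table(terms):
    """Map each query term to the (ontology, concept label) pairs it matches."""
    table = {}
    for term in terms:
        candidates = {term} | RELATED_WORDS.get(term, set())
        matches = set()
        for ontology, labels in ONTOLOGY_INDEX.items():
            for label in labels:
                if any(similar(candidate, label) for candidate in candidates):
                    matches.add((ontology, label))
        table[term] = matches
    return table


table = build_entity_mapping_table(["cities", "Spain"])
```

With this toy index, “cities” maps to `City` in `geo` and `Metropolis` in `travel`, while “Spain” has no match because none of the example ontologies defines it.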

In the third step, the Triple Similarity Service module took the Entity Mapping Tables and linguistic triples as input, analyzing the ontology relationships to extract a small set of ontologies that jointly covered the user query. It produced Triple Mapping Tables that related linguistic triples with equivalent ontological triples. These triples were then used to analyze the knowledge bases and generate the final answer.
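One way to picture the selection of a small set of ontologies that jointly cover the query is a greedy set cover over the mapped terms. This is an assumption made for illustration: the description above does not state which selection strategy the Triple Similarity Service used, and the sketch ignores the analysis of ontology relationships entirely. The input format (term to set of `(ontology, label)` pairs) is also hypothetical.

```python
def select_covering_ontologies(entity_mapping_table):
    """Greedily pick ontologies until every mappable query term is covered."""
    # Invert the table: ontology -> set of query terms it can map.
    coverage = {}
    for term, matches in entity_mapping_table.items():
        for ontology, _label in matches:
            coverage.setdefault(ontology, set()).add(term)

    # Terms with no mapping at all cannot be covered and are left out.
    uncovered = {term for term, matches in entity_mapping_table.items() if matches}
    selected = []
    while uncovered:
        # Sorting first makes tie-breaking deterministic (alphabetical order).
        best = max(sorted(coverage), key=lambda name: len(coverage[name] & uncovered))
        selected.append(best)
        uncovered -= coverage[best]
    return selected


example_table = {
    "cities": {("geo", "City"), ("travel", "Metropolis")},
    "Spain": {("geo", "Spain")},
    "rivers": {("hydro", "River")},
}
chosen = select_covering_ontologies(example_table)
```

In this example `geo` is chosen first because it covers two terms, and `hydro` is added to cover “rivers”; `travel` is never needed.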

Finally, since each resultant ontological triple provided only partial answers, the fourth component aimed to merge and rank the various interpretations produced by different ontologies into one complete answer.
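A minimal sketch of merging and ranking, assuming that answers from different ontologies are fused when their labels match case-insensitively and that answers supported by more ontologies rank higher. Both assumptions are illustrative simplifications and are not taken from PowerAqua's actual merging and ranking algorithms.

```python
from collections import defaultdict


def merge_and_rank(partial_answers):
    """Fuse per-ontology answer lists and rank them by number of supporting ontologies.

    partial_answers maps an ontology name to the list of answer labels it returned.
    Returns (label, support_count) pairs, best supported first.
    """
    support = defaultdict(set)  # normalized label -> ontologies that returned it
    display = {}                # normalized label -> first spelling seen
    for ontology, answers in partial_answers.items():
        for answer in answers:
            key = answer.strip().lower()
            support[key].add(ontology)
            display.setdefault(key, answer.strip())

    # Sort by descending support, then alphabetically for a stable order.
    ranked = sorted(support, key=lambda key: (-len(support[key]), key))
    return [(display[key], len(support[key])) for key in ranked]


merged = merge_and_rank({
    "geo": ["Madrid", "Barcelona"],
    "travel": ["madrid", "Seville"],
})
```

Here “Madrid” is returned by both example ontologies and therefore ranks first, ahead of the two answers that each come from a single ontology.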

Demo

PowerAqua was designed to answer factual queries that could be represented by one or more linguistic triples, where the triple represented an explicit or implicit relationship between two terms. The linguistic triple served as a formal, simplified representation of the natural language query, which could be translated into one or more ontological triples in the same or different ontologies.

The system could handle a wide range of queries, including wh-questions (such as “what,” “who,” “when,” “where,” and “how many”) and imperative commands (like “list,” “give,” or “tell”). These queries were categorized by PowerAqua based on their equivalent intermediate representation. For instance, the query “who are the academics involved in the semantic web?” was represented as <academics, involved, semantic web>, while other queries, like “are there any PhD students in Akt?” were represented as <PhD students, ?, Akt>.

However, PowerAqua was not able to answer certain types of queries, including those requiring temporal or causal reasoning (e.g., “why” questions), queries with negations (e.g., “do not,” “never”), and those that involved ranking, comparatives, superlatives, or percentages (e.g., “the most,” “the best,” “what is the percentage of the population…”). Additionally, queries containing titles or names separated by prepositions needed to be quoted, such as “Who wrote ‘Alicia in Wonderland’?” or “Who works in the ‘University of Zaragoza’?”
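The restrictions above could be approximated by a simple keyword pre-check before a query is submitted. The sketch below is a hypothetical helper, not part of PowerAqua; its patterns cover only the example phrases mentioned in the text and would miss many other phrasings.

```python
import re

# Hypothetical keyword patterns for the unsupported query types listed above.
UNSUPPORTED_PATTERNS = {
    "causal": r"\bwhy\b",
    "negation": r"\b(?:not|never)\b",
    "superlative or ranking": r"\bthe (?:most|best)\b",
    "percentage": r"\bpercentage\b",
}


def unsupported_features(query):
    """Return the names of unsupported features detected in the query."""
    lowered = query.lower()
    return [
        name
        for name, pattern in UNSUPPORTED_PATTERNS.items()
        if re.search(pattern, lowered)
    ]


flags = unsupported_features("Why is Madrid the best city?")
```

An empty list means that none of the listed keywords was found; it does not guarantee that the query is answerable.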

Evaluation

Evaluation 1: The Semantic Web community was still far from defining standard evaluation benchmarks. The UAM group, in collaboration with KMi, developed a novel “startup” benchmark to evaluate Semantic Search Systems. PowerAqua was evaluated as part of an information retrieval (IR) system using this new benchmark, which was based on the TREC 9 and TREC 2001 standards. The evaluation used the TREC WT10G collection (10 GB of Web documents), 100 queries based on real user logs, and document judgments for each query. These judgments enabled the calculation of precision and recall metrics. The evaluation aimed to assess both the query results retrieved from the Semantic Web and the advantage of using semantic information for document retrieval.
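For reference, precision and recall for a single query can be computed from the retrieved documents and the judged-relevant documents as sketched below. The function name and the document identifiers are illustrative only; the numbers are not results from the evaluation.

```python
def precision_recall(retrieved, relevant):
    """Compute precision and recall for one query from document identifiers."""
    retrieved_set = set(retrieved)
    relevant_set = set(relevant)
    hits = len(retrieved_set & relevant_set)
    # Guard against empty sets to avoid division by zero.
    precision = hits / len(retrieved_set) if retrieved_set else 0.0
    recall = hits / len(relevant_set) if relevant_set else 0.0
    return precision, recall


# Made-up example: 4 documents retrieved, 3 judged relevant, 2 in common.
precision, recall = precision_recall(["d1", "d2", "d3", "d4"], ["d2", "d4", "d7"])
```

In this made-up example two of the four retrieved documents are relevant (precision 0.5) and two of the three relevant documents are retrieved (recall 2/3).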

Evaluation 2: PowerAqua was also evaluated as a standalone system, focusing on its ability to map user queries into ontological triples in real time using multiple ontologies. This evaluation assessed its mapping capabilities rather than its linguistic coverage or its merging and ranking components, which were still limited at the time.

Evaluation 3: PowerAqua’s merging and ranking capabilities were evaluated for queries requiring answers derived from combining multiple facts from the same or different ontologies.

Evaluation 4: Informal experiments were conducted to assess whether answers provided by PowerAqua from DBpedia and other ontologies could be used to perform query expansion and improve the precision of Yahoo web searches. The efficiency of different ranking mechanisms for semantic results was also tested to determine the best method for eliciting accurate web search results.

Evaluation 5: Additional informal experiments were performed with ad-hoc queries to measure PowerAqua’s performance when used as an interface to Watson.

Publications

Lopez, V., Uren, V., Sabou, M. and Motta, E. (2011) Is Question Answering fit for the Semantic Web? A Survey, Journal of Semantic Web – Interoperability, Usability, Applicability, In Press

Lopez, V., Nikolov, A., Sabou, M., Uren, V. and Motta, E. (2010) Scaling up Question-Answering to Linked Data, Knowledge Engineering and Knowledge Management by the Masses (EKAW-2010), Lisboa, Portugal.

Lopez, V., Sabou, M., Uren, V. and Motta, E. (2009) Cross-Ontology Question Answering on the Semantic Web - an initial evaluation, Knowledge Capture Conference, 2009, California.

d’Aquin, M., Sabou, M., Motta, E., Angeletou, S., Gridinoc, L., Lopez, V. and Zablith, F. (2008) What can be done with the Semantic Web? An Overview of Watson-based Applications, 5th Workshop on Semantic Web Applications and Perspectives, SWAP 2008, Rome, Italy.

d’Aquin, M., Lopez, V. and Motta, E. (2008) FABilT – Finding Answers in a Billion Triples, Semantic Web billion Triple Challenge at ISWC.

d’Aquin, M. and Lopez, V. (2008) Finding Answers on the Semantic Web, Nodalities Magazine September / October 2008 issue.

d’Aquin, M., Motta, E., Sabou, M., Angeletou, S., Gridinoc, L., Lopez, V. and Guidi, D. (2008) Towards a New Generation of Semantic Web Applications, IEEE Intelligent Systems, 23, 3, pp. 20-28.

Uren, V., Lei, Y., Lopez, V., Liu, H., Motta, E. and Giordanino, M. (2007) The usability of semantic search tools: a review, Knowledge Engineering Review, 22, 4, pp. 361-377.

Gracia, J., Lopez, V., d’Aquin, M., Sabou, M., Motta, E. and Mena, E. (2007) Solving Semantic Ambiguity to Improve Semantic Web based Ontology Matching, Workshop: Ontology Matching at the 6th International Semantic Web Conference and the 2nd Asian Semantic Web Conference.

Lopez, V., Uren, V., Motta, E. and Pasin, M. (2007) AquaLog: An ontology-driven question answering system for organizational semantic intranets, Journal of Web Semantics, 5, 2, pp. 72-105, Elsevier.

Lopez, V. (2007) State of the art on Semantic Question Answering, KMi-Tech Report 2007.

Lopez, V., Sabou, M. and Motta, E. (2006) PowerMap: Mapping the Real Semantic Web on the Fly, International Semantic Web Conference, Georgia, Atlanta.

Sabou, M., Lopez, V. and Motta, E. (2006) Ontology Selection on the Real Semantic Web: How to Cover the Queen’s Birthday Dinner?, EKAW 2006 – 15th International Conference on Knowledge Engineering and Knowledge Management, Podebrady, Czech Republic.

Lopez, V., Motta, E. and Uren, V. (2006) PowerAqua: Fishing the Semantic Web, European Semantic Web Conference 2006, Montenegro.

Sabou, M., Lopez, V., Motta, E. and Uren, V. (2006) Ontology Selection: Ontology Evaluation on the Real Semantic Web, Workshop: Evaluation of Ontologies for the Web (EON 2006) at 15th International World Wide Web Conference, Edinburgh.

Lopez, V. and Motta, E. (2005) PowerAqua: An Ontology Question Answering System for the Semantic Web, Workshop: Ontologias y Web Semantica 2005. Held in conjunction with CAEPIA 2005, Spain.